Predictive models analyze the available historical data and identify patterns in it, producing forecasts and predictions that help you make better decisions. They can be used, for example, to predict demand, identify fraudulent activity, detect equipment failures before they happen, or anticipate potential risks. These capabilities bring great business value to companies in today’s uncertain and competitive environment.

In order to find meaningful patterns and provide differential value, these models need to analyze a large volume of historical data. These data usually come from different sources, arrive in different formats and carry different weights. That is why it is important, first of all, to have a good data ingestion infrastructure and process, that is, one that imports data files from multiple sources into a single storage system.

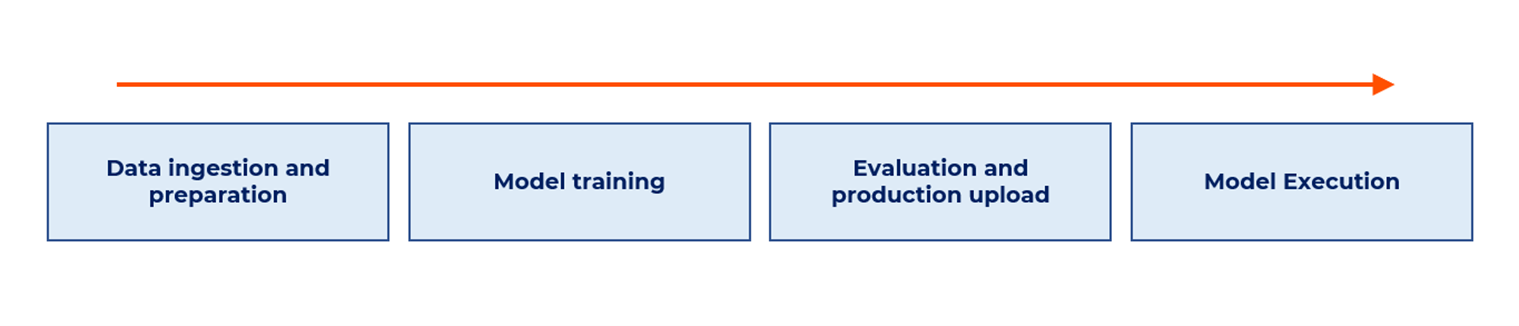

Predictive models must be trained with data before predictions can be made. This requires an agile training and model registration environment capable of re-training models frequently and quickly. Once we have the models we must evaluate their quality before uploading them to production.

After uploading them to production, the models are executed and the predictions obtained are stored. These predictions can be exploited in Business Intelligence tools in which the client can easily visualize the information through dashboards.

Projects of this type (creation, training and implementation of predictive models) usually involve several profiles: client-side staff, IT operations and development teams. For that reason, having a cloud architecture can be very helpful. Using cloud tools or virtual machines improves work agility regardless of the software development methodology used (Agile, Scrum, DevOps…), and also offers integration options with other services.

A possible architecture for exploiting forecasting models in the cloud is explained below. It starts from a logical architecture diagram and provides some technical options for its implementation.

Example of a cloud-based forecasting model exploitation architecture

Let’s see a forecasting model exploitation architecture in a cloud environment in more depth:

As we can see in the figure, in the first phase of ‘Data ingestion and preparation’ the data would be extracted from the data sources and copied to the Data Lake. As previously mentioned, the data sources can be diverse: SQL databases, NoSQL databases, files stored on-premises or in the cloud, etc.

Solutions that make data ingestion easier include ETL/ELT tools of various kinds, such as Azure Data Factory/Databricks, Apache Airflow, AWS Glue, Oracle Data Integrator and many others. It is important to note that in data ingestion and processing, as the saying goes, the devil is in the details. These details are usually related to the data model(s) underlying the original data source(s).
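As a minimal sketch of this ingestion step, assume a local directory stands in for the Data Lake and the sources are a CSV file and a SQLite database. These are hypothetical stand-ins for the real sources, and the helper names (`land_rows`, `read_csv_source`, `read_sql_source`) are ours rather than part of any of the tools listed above. Each source is landed unchanged, in a common format, under a folder named after the source and the load date:

```python
import csv
import json
import sqlite3
from datetime import date
from pathlib import Path


def read_csv_source(path: Path) -> list[dict]:
    """Read a CSV file into a list of row dictionaries."""
    with path.open(newline="", encoding="utf-8") as fh:
        return list(csv.DictReader(fh))


def read_sql_source(db_path: Path, query: str) -> list[dict]:
    """Run a query against a SQLite database and return row dictionaries."""
    conn = sqlite3.connect(db_path)
    try:
        conn.row_factory = sqlite3.Row
        return [dict(row) for row in conn.execute(query)]
    finally:
        conn.close()


def land_rows(lake_root: Path, source_name: str, rows: list[dict], run_date: date) -> Path:
    """Write the rows of one source into the raw layer as JSON Lines."""
    target_dir = lake_root / "raw" / source_name / run_date.isoformat()
    target_dir.mkdir(parents=True, exist_ok=True)
    target = target_dir / "data.jsonl"
    with target.open("w", encoding="utf-8") as fh:
        for row in rows:
            fh.write(json.dumps(row, default=str) + "\n")
    return target
```

Keeping the raw copy untouched means that any later surprise in the source data model can be investigated without going back to the source system.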

In the next stage of ‘Model training’ the data would be extracted from the Data Lake and the necessary preprocessing would be performed for the correct training and exploitation of the model. Once preprocessed, the data would be stored and grouped in data sets waiting to be used for model training. This is important, especially if different models are to be defined depending on the partitioning and nature of the input data. For example, if we are going to forecast sales for the different stores of a brand, we may want to define different models depending on the geographic location of the stores or other factors.
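The grouping into data sets can be illustrated with a small sketch. It assumes the preprocessed rows are plain dictionaries and that `region` is the partitioning column; both the column names and the sample values are invented for illustration:

```python
from collections import defaultdict


def split_into_datasets(rows, key):
    """Group preprocessed rows into one data set per distinct value of `key`."""
    datasets = defaultdict(list)
    for row in rows:
        datasets[row[key]].append(row)
    return dict(datasets)


# Hypothetical preprocessed sales rows for four stores of a brand.
rows = [
    {"store": "A", "region": "north", "units": 30},
    {"store": "B", "region": "north", "units": 28},
    {"store": "C", "region": "south", "units": 55},
    {"store": "D", "region": "south", "units": 61},
]

# One data set per region, each of which would later get its own model.
datasets = split_into_datasets(rows, key="region")
```

Choosing a different key (store format, city size, and so on) only changes the argument, not the rest of the training pipeline.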

When training these models for all data sets, it is highly recommended to have the technology needed to train them in parallel. In addition, for subsequent evaluation, it is also necessary to record (persist) the trained models along with their test metrics. Tools such as Azure Machine Learning, AWS SageMaker, Google Colab and others are available for implementing this type of solution.
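The idea of training one model per data set in parallel and persisting each model with its test metric can be sketched as follows. This is only an illustration under strong assumptions: the "model" is a naive mean forecaster, the metric is the mean absolute error (MAE) on a 20% holdout, the registry is a local folder of JSON files, and a thread pool stands in for the parallel compute that a managed service would provide. The function names are hypothetical.

```python
import json
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path
from statistics import mean


def train_one(name: str, series: list[float], registry: Path) -> dict:
    """Fit a naive mean forecaster on the first 80% and score it on the rest."""
    split = max(1, int(len(series) * 0.8))
    train, test = series[:split], series[split:]
    forecast = mean(train)
    # MAE on the holdout; None when the series is too short to hold anything out.
    mae = mean(abs(y - forecast) for y in test) if test else None
    record = {"dataset": name, "model": {"type": "mean", "value": forecast}, "mae": mae}
    # Persist the trained model together with its test metric.
    registry.mkdir(parents=True, exist_ok=True)
    (registry / f"{name}.json").write_text(json.dumps(record), encoding="utf-8")
    return record


def train_all(datasets: dict[str, list[float]], registry: Path) -> list[dict]:
    """Train one model per data set concurrently and return their records."""
    with ThreadPoolExecutor() as pool:
        futures = [pool.submit(train_one, name, series, registry)
                   for name, series in datasets.items()]
        return [future.result() for future in futures]
```

For CPU-bound training of real models, a process pool or a cluster of workers would be the more realistic choice; the structure of the code stays the same.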

In a third phase of ‘Evaluation and production upload’ the trained models would be evaluated before moving them to production. The necessary logic would be applied to determine whether a model meets the deployment criteria, checking that the model’s goodness of fit is aligned with business expectations. Models that meet the established requirements would then be registered and uploaded to the production environment.

To measure this performance, the accuracy of the models must be evaluated against one or more thresholds that act as a “sieve”. The models that pass this filter are the ones that will be run in production, so they must be stored safely before they are executed. This can be done in the workspace of the chosen tool or in a container registry where we save the images of the executables we need for the production environment. The latter option is particularly interesting for models that can be executed both in batch mode (unattended) and in online mode, that is, models whose execution must be fast and which can therefore be deployed as microservices in a Kubernetes cluster or similar. An example of this type of model could be an online purchase recommender, which can be executed unattended several times a day or on demand at the users’ discretion.
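The threshold-based sieve described above can be sketched in a few lines. The example assumes each trained model comes with a record holding a test metric called `mae` (mean absolute error, lower is better); the field names, the sample values and the threshold of 5.0 are all hypothetical:

```python
def select_for_production(records, max_mae):
    """Split model records into promoted and rejected using an error threshold."""
    promoted, rejected = [], []
    for record in records:
        # A model without a metric cannot be judged, so it is never promoted.
        passes = record["mae"] is not None and record["mae"] <= max_mae
        (promoted if passes else rejected).append(record)
    return promoted, rejected


# Hypothetical evaluation records, one per trained model.
records = [
    {"dataset": "north", "mae": 2.0},
    {"dataset": "south", "mae": 7.5},
    {"dataset": "east", "mae": None},
]

promoted, rejected = select_for_production(records, max_mae=5.0)
```

In practice the threshold would come from the business expectations mentioned earlier, and several metrics could be combined in the `passes` condition.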

This model evaluation and filtering process can be implemented with tools such as Azure DevOps and Azure Machine Learning, AWS Step Functions and SageMaker, and others. Container registries are available in all the major cloud platforms: ACR (Azure), ECR (AWS), Container Registry (GCP), etc. They can also be installed on-premises, if required.

Once the models are in the production environment, the last stage of ‘Model Execution’ would be entered. In this phase, either on a scheduled basis or at the client’s request, the model would be run using the latest available data. Then the model outputs (predictions) would be stored in the Data Lake. Subsequently these predictions, as mentioned above, could be dumped and exploited by Business Intelligence tools, in which the customer would visualize the information through dashboards.
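A minimal sketch of this execution stage, assuming a local directory stands in for the Data Lake and a deliberately trivial model (a moving average over the last `window` observations) stands in for the real one. The same `predict` function serves both the scheduled batch run and an on-demand call; all names here are hypothetical:

```python
import json
from datetime import date
from pathlib import Path


def predict(model: dict, history: list[float]) -> float:
    """Hypothetical model: forecast the mean of the last `window` observations."""
    window = history[-model["window"]:]
    return sum(window) / len(window)


def run_batch(model: dict, latest_data: dict[str, list[float]],
              lake_root: Path, run_date: date) -> Path:
    """Score every series with the latest data and store the predictions in the lake."""
    out_dir = lake_root / "predictions" / run_date.isoformat()
    out_dir.mkdir(parents=True, exist_ok=True)
    out_file = out_dir / "predictions.jsonl"
    with out_file.open("w", encoding="utf-8") as fh:
        for key, history in latest_data.items():
            row = {"key": key, "prediction": predict(model, history)}
            fh.write(json.dumps(row) + "\n")
    return out_file
```

A scheduler would call `run_batch` at fixed times, while an on-demand request would call `predict` directly; a Business Intelligence tool would then read the stored prediction files.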

For the scheduled execution of the models promoted to production, there is a wide variety of tools in the cloud, such as Azure Machine Learning, Amazon CloudWatch with SageMaker Inference, etc. For on-demand execution, as discussed earlier, it is advisable to have an execution environment that allows it: a Kubernetes cluster would be an ideal environment, along with a container registry from which to pull the images to be deployed in the cluster as microservices. Managed Kubernetes and comparable container services are available in all the cloud solutions one might consider, and Kubernetes can also be set up on-premises: AKS (Azure), EKS and Fargate (AWS), GKE and Cloud Run (GCP), etc.

Final Considerations

Without a software architecture that provides agility in managing and loading data, models will take longer than necessary and may not even work the way they should.

Here are some final considerations (without going into the assessment of tools) to complete the vision of this type of process:

- Whenever Machine Learning (ML) models are executed, whether in unattended or online mode, their execution must be monitored in terms of both performance and accuracy. That way, when a drop in the accuracy of an ML model is detected in production, the corresponding alert is raised, or an automated retraining process for that model is even launched.

- To facilitate the ingestion and organization of the data used during training, it is best to have a data lake that allows you to organize the data in different layers (raw data, clean data, transformed data, etc.), so that the data of most interest in each case, in the right format, is easy to obtain.

- If ML models are exposed as microservices so that they can be run on demand, as mentioned above, a good solution is to deploy the containerized images on a Kubernetes cluster. It is also appropriate to expose the model through a properly secured and audited REST API, so that any application that needs it can invoke the model. To expose the online model invocation APIs in this controlled and secure way, it is recommended to use some kind of API Management System.
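The monitoring consideration in the first point above can be sketched as a rolling accuracy check. This is an illustrative assumption, not a prescribed method: the monitor keeps the absolute errors of the most recent predictions and flags the model when their mean exceeds the error measured at deployment time by a chosen factor. The class name, the `tolerance` factor and the window size are hypothetical:

```python
from collections import deque
from typing import Optional


class AccuracyMonitor:
    """Track a rolling mean absolute error and flag accuracy degradation."""

    def __init__(self, baseline_mae: float, tolerance: float = 1.5, window: int = 50):
        self.baseline_mae = baseline_mae  # error measured when the model was deployed
        self.tolerance = tolerance        # allowed degradation factor
        self.errors = deque(maxlen=window)  # only the most recent errors are kept

    def record(self, predicted: float, actual: float) -> None:
        """Store the error of one prediction once the real value is known."""
        self.errors.append(abs(predicted - actual))

    def rolling_mae(self) -> Optional[float]:
        """Mean absolute error over the current window, or None if it is empty."""
        return sum(self.errors) / len(self.errors) if self.errors else None

    def needs_retraining(self) -> bool:
        """True when the rolling error exceeds baseline_mae * tolerance."""
        current = self.rolling_mae()
        return current is not None and current > self.baseline_mae * self.tolerance
```

When `needs_retraining()` returns `True`, the surrounding system could send an alert or launch the retraining pipeline for that model.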

A final point concerns the development cycle. In the training and inference pipelines, it is important to design a flow that isolates the ML algorithms from the technical processes that run before and after them. On many occasions, though not always, these developments are carried out by different teams with different technological profiles. It is therefore highly advisable to have well-defined roles for each profile when they interact with each other.
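One possible way to achieve this isolation, shown here only as a sketch, is to agree on a small interface between the teams: the pipeline owns loading and storing, and the ML algorithm only has to provide `fit` and `predict`. The `Forecaster` interface, the trivial `LastValueForecaster` and the `run_pipeline` function are hypothetical names introduced for illustration:

```python
from typing import Callable, Protocol, Sequence


class Forecaster(Protocol):
    """Contract the ML team implements; the pipeline knows nothing else about the model."""

    def fit(self, history: Sequence[float]) -> None: ...

    def predict(self) -> float: ...


class LastValueForecaster:
    """Trivial stand-in algorithm: predicts the last observed value."""

    def fit(self, history: Sequence[float]) -> None:
        self._last = history[-1]

    def predict(self) -> float:
        return self._last


def run_pipeline(load: Callable[[], Sequence[float]],
                 model: Forecaster,
                 store: Callable[[float], None]) -> float:
    """Technical flow owned by the engineering team: load, fit, predict, store."""
    history = load()
    model.fit(history)
    prediction = model.predict()
    store(prediction)
    return prediction
```

With this split, the algorithm can be replaced without touching the ingestion or storage code, and the technical steps can change without touching the algorithm.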

At decide4AI, as experts in the development and implementation of predictive models and flexible software architectures in the cloud, we can advise you on: which pieces or types of architectures are more beneficial for your company, which techniques or models will be more suitable for your specific problem, which other technologies could help you improve your results, etc.
